Best Practices for Testing Your Web Site Before Going Live

So you’ve got your web space, your website is designed and you have some content – in fact you’re all ready to go live.

Or are you?

There are various things that need to be tested and checked before you can go live. Some of these will need to be done after the website has been uploaded to your server, but all should be checked and the correct changes made before people start visiting.

Many website administrators like to “launch” their latest online projects some time after the site first goes live. This gives you the chance to tighten things up a bit before people start visiting in their droves, and to get feedback on anything that doesn’t work. If you find any of the following checks difficult or impossible to carry out yourself, having a small group of trusted people check the website for you is a good option.

For the best results, these should all be checked before your website goes live or launches.

Cross Browser Testing

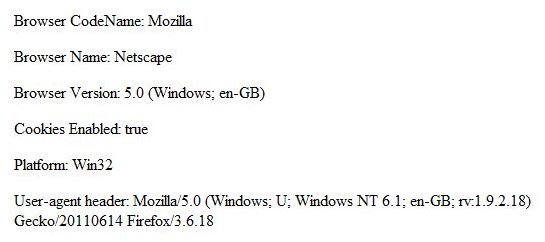

Your website might look great in Firefox, but how does it look in Internet Explorer, Safari or Opera? How does it render on a smartphone or tablet?

The differences between all of the popular web browsers mean that web pages will appear slightly different from device to device, and browser to browser. In some cases this isn’t a problem but, in many more, the issue requires alterations and additions to the CSS file to create a uniform look for all users. Expecting your users to update their browsers isn’t an option, as their operating system might prevent this. Instead, you will need to make sure your development team are aware of these issues.

Various tools are available for checking how your website will look in different browsers. Our Selection of the Best Browser Compatibility Tools features the best options that you can use.

Meta Data and Titles

Meta data is the collection of statements and keywords found in the head section of your HTML page (whether pure HTML or generated by ASP or PHP) and read by search engines. The Meta Description provides a description of the website that can appear in the search results; the Meta Keywords should be selected based on the content of your website, although major search engines such as Google give them little or no weight in ranking.
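As a rough illustration of checking these elements before launch, the Python sketch below parses a page and reports which head items are missing. The class name, function name and sample page are hypothetical, and the list of required items is an assumption you would adjust to your own site.

```python
from html.parser import HTMLParser


class HeadAudit(HTMLParser):
    """Collect the <title> text and named <meta> tags from an HTML page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta = {}  # e.g. {"description": "...", "keywords": "..."}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and attrs.get("name") and attrs.get("content") is not None:
            self.meta[attrs["name"].lower()] = attrs["content"]

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def missing_head_items(html, required=("description", "keywords")):
    """Return the names of head items that are absent or empty."""
    audit = HeadAudit()
    audit.feed(html)
    missing = [] if audit.title.strip() else ["title"]
    missing += [name for name in required if not audit.meta.get(name, "").strip()]
    return missing


# Hypothetical page used only for illustration.
SAMPLE_PAGE = """<html><head>
<title>Example Bakery</title>
<meta name="description" content="Fresh bread baked daily.">
</head><body></body></html>"""

print(missing_head_items(SAMPLE_PAGE))  # ['keywords']
```

Running a check like this over every page is a quick way to spot templates that were uploaded without a title or description.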

While your website will still be found in most cases, it is a good idea to have these elements in place early on. For instance, your website title (which appears in the page header in

Favicons and Images

Checking every page of your website is vital when you first go live. One thing to look for is images, and this is another good reason for cross-browser checks, as missing images appear in different ways depending on your browser. For instance, Firefox will ignore the missing picture or icon, whereas Internet Explorer will display a red “X” where the image should be found.

If you are using a database-driven solution where images are handled in the database, then you can expect these to continue working on the live site, provided the database itself has been migrated correctly and the website can connect to it.

Something else image-related that you should make sure you have early on is the favorites icon, or favicon. This is a small icon that appears next to your website in a reader’s list of bookmarks, as well as in the address bar and on browser tabs. It should be in .ico format for the best results across all browsers, and can be created from a GIF, JPEG, PNG or SVG file.

A favicon saved in the website’s root directory is added as follows:
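A commonly used form, assumed here rather than taken from the original, is a tag such as `<link rel="shortcut icon" href="/favicon.ico">` placed in the page’s head section. As a rough check that a page carries one, the Python sketch below looks for any link tag whose rel value includes "icon". The class, function and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class FaviconFinder(HTMLParser):
    """Record the href of every <link> whose rel value includes "icon"."""

    def __init__(self):
        super().__init__()
        self.icons = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "icon" in rel and attrs.get("href"):
            self.icons.append(attrs["href"])


def find_favicons(html):
    """Return the favicon hrefs declared in the given HTML."""
    finder = FaviconFinder()
    finder.feed(html)
    return finder.icons


# Hypothetical page head; "/favicon.ico" assumes the icon sits in the root.
PAGE = '<head><link rel="shortcut icon" href="/favicon.ico"></head>'
print(find_favicons(PAGE))  # ['/favicon.ico']
```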

Checking Links and Content

Other things that you should be checking are links and content. As far as content goes, you should be using a spell check tool to make sure that there are no grammar and spelling errors. The www.spellcheck.net website is a good online tool for doing this, or you might copy and paste your content into Microsoft Word.

Similarly, you need to check that all of your links are active and valid. As with images, broken links might be caused by the upload to the live host, or might simply be vestiges of pre-production design. Either way, you will need to spend time working through your website looking for dead or broken links and making the necessary changes. The W3C Link Checker at https://validator.w3.org/checklink can help with this.
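If you would rather script this check, the following Python sketch shows one possible approach using only the standard library: collect the link and image URLs from a page, request each one, and list those that fail. The function names are hypothetical, and the sketch assumes your server answers HEAD requests (some do not, in which case a GET would be needed).

```python
from html.parser import HTMLParser
from urllib.error import HTTPError, URLError
from urllib.parse import urljoin
from urllib.request import Request, urlopen

LINK_ATTRS = {"a": "href", "img": "src"}  # tag -> attribute holding the URL


class LinkCollector(HTMLParser):
    """Gather absolute http(s) URLs from <a href> and <img src>."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.urls = []

    def handle_starttag(self, tag, attrs):
        target = dict(attrs).get(LINK_ATTRS.get(tag, ""))
        if target:
            url = urljoin(self.base_url, target)
            if url.startswith(("http://", "https://")):  # skip mailto: and similar
                self.urls.append(url)


def collect_links(html, base_url):
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.urls


def http_status(url, timeout=10):
    """Return the HTTP status code for url, or None if it cannot be reached."""
    try:
        with urlopen(Request(url, method="HEAD"), timeout=timeout) as response:
            return response.status
    except HTTPError as error:
        return error.code
    except URLError:
        return None


def broken_links(html, base_url, status_of=http_status):
    """List (url, status) pairs whose status is missing or 400 and above."""
    results = [(url, status_of(url)) for url in collect_links(html, base_url)]
    return [(url, status) for url, status in results if status is None or status >= 400]
```

Calling `broken_links(page_html, "https://example.com/")` on each page’s HTML returns the URLs that need attention; the `status_of` parameter lets you swap in a fake lookup when testing without a network connection.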

Forms and Scripts

Any forms that you have on your website should be checked both for errors in the execution and submission of the data and for vulnerability to script injection attacks. This is where a string of characters is entered into the form so that, when submitted, your website can be compromised. Clearly this is a risk, which is why only securely-coded forms should be present on your website.
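One common defense, sketched below in Python, is to escape user-supplied text before it is written back into a page, so that any markup in the input is displayed as plain text instead of being executed. The `render_comment` function and its HTML layout are hypothetical; `html.escape` is part of the Python standard library. Escaping output is only one part of secure form handling and does not replace validation or protection against other attacks such as SQL injection.

```python
import html


def render_comment(author, comment):
    """Build an HTML fragment with user-supplied text escaped."""
    safe_author = html.escape(author)
    safe_comment = html.escape(comment)
    return f"<p><strong>{safe_author}</strong>: {safe_comment}</p>"


# A typical test string to submit through your own forms.
attack = '<script>alert("hi")</script>'
print(render_comment("visitor", attack))
# <p><strong>visitor</strong>: &lt;script&gt;alert(&quot;hi&quot;)&lt;/script&gt;</p>
```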

If you have any JavaScript or AJAX content on your website, this should also be tested, again on multiple browsers, to make sure that the content works as intended. Some users disable JavaScript in their browser, and as a result you will need to make sure that any errors are dealt with in a friendly manner. You might also opt to work around the lack of JavaScript in some browsers by hiding whatever feature is delivered by the scripting.

404 Error Pages

A slight error in a page URL or a dead link (or, on some database-driven websites, even a brief database interruption) can result in a 404 error page being displayed instead of your usual website. In these situations you don’t want to display “unfriendly” messages to your website visitors, who will likely close the window when faced with an error. Instead, you can create custom 404 error pages that look like the rest of your website and offer alternative pages and tools such as a search page.
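How a custom error page is configured depends on your server or framework. As a minimal sketch of the idea, assuming a small Python WSGI application with a hypothetical table of pages, the handler below returns a friendly page for unknown URLs while still sending the 404 status code, so browsers and search engines know the page does not exist.

```python
# Hypothetical page table; a real site would map URLs to templates or files.
PAGES = {"/": "<h1>Home</h1>", "/about": "<h1>About us</h1>"}

NOT_FOUND_PAGE = (
    "<h1>Sorry, we could not find that page</h1>"
    '<p>Try the <a href="/">home page</a> or <a href="/search">search the site</a>.</p>'
)


def app(environ, start_response):
    """Minimal WSGI app: serve known pages, otherwise a friendly 404."""
    body = PAGES.get(environ.get("PATH_INFO", "/"))
    status = "200 OK"
    if body is None:
        # Keep the 404 status even though the page itself looks friendly.
        status, body = "404 Not Found", NOT_FOUND_PAGE
    payload = body.encode("utf-8")
    start_response(status, [("Content-Type", "text/html; charset=utf-8"),
                            ("Content-Length", str(len(payload)))])
    return [payload]


# To try it locally:
#   from wsgiref.simple_server import make_server
#   make_server("", 8000, app).serve_forever()
```

Before launch, request a deliberately wrong URL and confirm that you see the custom page and that the response status is still 404.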

Sitemaps and Analytics

We’ve touched briefly on Meta descriptions and keywords above, but these things can only play a part in making sure your website is found by the right search engines. Content is the main draw to your site, and as the website grows you will need to deal with having a sitemap that grows along with it.

The benefits of this might not be obvious, but they are considerable. A search engine robot can follow links from page to page, but a sitemap helps it find the entire website’s content, including pages that are poorly linked. If it can’t find everything then your site will not be indexed fully.
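Many content management systems generate a sitemap for you. If yours does not, the Python sketch below shows roughly what is involved, assuming the XML format described at sitemaps.org and a hypothetical list of page URLs.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return sitemap XML (as a string) listing each URL in a <loc> element."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical URLs; in practice these would come from your pages or database.
print(build_sitemap(["https://example.com/", "https://example.com/about"]))
```

The result is usually saved as sitemap.xml in the website’s root directory and regenerated whenever pages are added or removed.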

You may also wish to check that you have website analytics code in your webpages before the site goes live. This can be anything from standard hit counter code to something like Google Analytics, which can be used to get a clearer picture of the type of visitors you are attracting.

Does Your Website Validate to the Correct Web Standards?

If you haven’t done this already then you should be heading to validator.w3.org and making use of the website validator tool. This will check websites that are already live or can be used to validate files that are uploaded or pasted into the browser window.

W3C (the World Wide Web Consortium) are responsible for establishing web standards for layout, usability and accessibility, and their validator tool checks your website for all manner of coding errors and other problems.

Ideally this should be done quite early in the development process as there can be a lot of things to get through. As changes are made, further checks will be very beneficial.

Graceful Degradation and Progressive Enhancement

Graceful degradation is the process of providing versions of your website for less advanced browsers. For instance, as there are many computers still equipped with Internet Explorer 6, you might provide a version of your website’s CSS that is designed to allow readers with this browser to view your content. As you might imagine, this ties in with cross-browser testing.

As great as this might sound, however, it is often an afterthought. The best solution is progressive enhancement, whereby a basic but functional version of the website is created for the older browsers, and the enhanced versions are developed for later browsers. This way, no one loses out because the developers decided to focus on readers with newer hardware.

Optimization

Finally, optimizing your online presence is vital, particularly for database-driven websites. Optimization is the process of making sure your website is fast and efficient, and avoids dragging down the server that it is running on.

There are many ways in which this can be done, from minimizing HTTP requests to compressing CSS and JavaScript. Photographic images on your website should be in JPEG format wherever possible (PNG or GIF tends to suit logos and flat-color graphics better), and compressed enough to load quickly.
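To get a feel for what compression can save, the Python sketch below gzips a stylesheet and compares the sizes. It assumes gzip compression, which most web servers can apply automatically once enabled; the CSS here is generated purely for illustration, and real savings will vary from file to file.

```python
import gzip

# Hypothetical stylesheet; repeated rules stand in for a real CSS file.
css = "\n".join(
    f".card-{i} {{\n    margin: 0 auto;\n    padding: 10px 20px;\n}}" for i in range(200)
).encode("utf-8")

compressed = gzip.compress(css)
print(f"original: {len(css)} bytes, gzipped: {len(compressed)} bytes")
```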

Various software tools are also available to assist with caching, which keeps regularly-used data in the memory of your server.
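The principle behind caching can be sketched in a few lines of Python. Here `functools.lru_cache` from the standard library keeps recent results in memory, and the `load_article` function is a hypothetical stand-in for a slow database query. Dedicated caching tools work on a larger scale, but the idea is the same.

```python
from functools import lru_cache

query_count = 0  # counts how often the slow lookup actually runs


@lru_cache(maxsize=128)
def load_article(article_id):
    """Stand-in for a slow database query (hypothetical)."""
    global query_count
    query_count += 1
    return f"Article body for #{article_id}"


load_article(1)
load_article(1)  # served from memory, no second "query"
load_article(2)
print(query_count)  # 2
```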

Use Them All!

These ten best practices can give you a far more polished and impressive web presence than simply uploading your files and hoping for the best. If you have spent a lot of time on the website’s development and content already, skipping any of these steps will be doing you (and anyone else involved in the project) a massive disservice.

References

- Author’s own experience.

- Screenshots provided by author.