Google hasn’t always been the number one search engine on the internet, but now that it is, many people are interested in finding out how the Google search engine works. Let’s take a look!

Overview

Google is today’s largest search engine, and every webmaster strives to learn the secrets of search engine optimization to make their page rank the highest on Google’s results page. If you have ever wondered how Google determines which web sites are most deserving of the number one page rank, start here.

Google uses a technique known as “parallel processing” to run many computations at the same time, which speeds up the data processing the search engine depends on. It does this with a network of several thousand low-cost computers. Google’s search engine consists of three main parts:

- GoogleBot: crawls the web and downloads pages for the index.

- Indexer: sorts every word on a page, and stores the results in a database.

- Query Processor: looks at your search string, compares it to the results stored by the Indexer, then retrieves and presents the list of most relevant results.
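
As a rough illustration of the parallel processing idea mentioned above, the sketch below hands several small jobs to a pool of workers instead of running them one after another. The `count_words` function and the sample `pages` are made up for this example, and a thread pool on one machine only stands in for the idea; Google spreads the work across many separate computers.

```python
from concurrent.futures import ThreadPoolExecutor

def count_words(text: str) -> int:
    """A stand-in for any per-page computation."""
    return len(text.split())

# Hypothetical page contents, used only for illustration.
pages = [
    "the quick brown fox",
    "jumps over",
    "the lazy dog again and again",
]

# Each page is handed to a worker; map() returns results in input order.
with ThreadPoolExecutor(max_workers=3) as pool:
    counts = list(pool.map(count_words, pages))

print(counts)
```

The point is only that independent pieces of work, such as processing separate pages, can be handled at the same time.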

Now, let’s move on to take a closer look at each one of these three parts.

GoogleBot: Google’s Web Crawler

GoogleBot is the automated program that crawls the internet to gather web pages for the index. Crawling means that it sends requests to the servers hosting web sites, downloads copies of their pages, and passes them to the Indexer for processing. It works much faster than your web browser and can send thousands of these requests at one time. If you want to check out how GoogleBot sees your web site, take a look at this: Through the Eyes of the Google Bot.

GoogleBot finds new pages in one of two ways:

- Crawling the web

- The Google addURL page (Try not to use this. Google will find you. Submitting a site this way will likely cause Google to think you are spamming them, which is not a good thing!)

When GoogleBot finds a web page, it extracts the links and queues them for further crawling. Because of the depth to which GoogleBot can crawl, many of these pages are crawled only once a month. More popular web sites are crawled more often so that the catalog and index stay current with site updates.
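
The "extract the links and queue them" step can be sketched in a few lines. This is a minimal illustration, not GoogleBot's actual code: the `FAKE_WEB` dictionary stands in for real servers so the example runs without a network, and `LinkExtractor` and `crawl` are names invented here.

```python
from collections import deque
from html.parser import HTMLParser

class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in a page."""
    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)

# Hypothetical in-memory "web": URL -> HTML. A real crawler would
# download these pages over HTTP instead.
FAKE_WEB = {
    "/home": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "/about": '<a href="/home">Home</a>',
    "/blog": '<a href="/about">About</a> <a href="/post-1">Post</a>',
    "/post-1": "No links here.",
}

def crawl(start):
    frontier = deque([start])   # links waiting in line to be crawled
    seen = {start}              # avoids queuing the same page twice
    order = []
    while frontier:
        url = frontier.popleft()
        html = FAKE_WEB.get(url)
        if html is None:
            continue            # page does not exist in our fake web
        order.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for link in parser.links:
            if link not in seen:
                seen.add(link)
                frontier.append(link)
    return order

crawl_order = crawl("/home")
print(crawl_order)
```

The queue is what keeps discovered links "in line", and the `seen` set is one simple way to avoid crawling the same page over and over.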

Indexer

The information from GoogleBot is then passed on to the Indexer. The Indexer looks at every word on every page and catalogs it in a database, sorting the text alphabetically by search term. For each term, the index stores a list of the documents where the term appears, along with the location in the text where it appears. This gives Google quick access to any document that matches a user’s search string.
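
A structure like this is commonly called an inverted index. The sketch below builds a tiny one in memory, assuming a simple lowercase word tokenizer; the `tokenize` and `build_index` helpers and the two sample documents are invented for illustration and say nothing about how Google's own database is laid out.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Split text into lowercase words (an assumed, very simple tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(docs):
    """Map each term to {document id: [positions where the term appears]}."""
    index = defaultdict(lambda: defaultdict(list))
    for doc_id, text in docs.items():
        for position, term in enumerate(tokenize(text)):
            index[term][doc_id].append(position)
    # Sort terms alphabetically, as the article describes.
    return {term: dict(postings) for term, postings in sorted(index.items())}

# Hypothetical documents.
docs = {
    "page1": "Google crawls the web",
    "page2": "The web is large and the web grows",
}

index = build_index(docs)
print(index["web"])
```

Looking up a term is then a single dictionary access, which is why a query never needs to rescan the pages themselves.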

The Indexer skips common words such as “and” and “the”, along with certain single-letter and number combinations, to improve performance. These words do little to narrow down a search, so they can safely be left out of the search query.
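
Skipping common words might look like the following. The `STOP_WORDS` set here is a small made-up sample, not Google's actual list.

```python
# Assumed sample of common words; the real list is not given in this article.
STOP_WORDS = {"and", "the", "a", "of", "to", "in", "is"}

def remove_stop_words(terms):
    """Keep only the terms that help narrow down a search."""
    return [term for term in terms if term not in STOP_WORDS]

query_terms = "the history of the internet and the web".split()
filtered = remove_stop_words(query_terms)
print(filtered)
```

Eight words go in and three come out, so there is far less to look up in the index.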

Query Processor

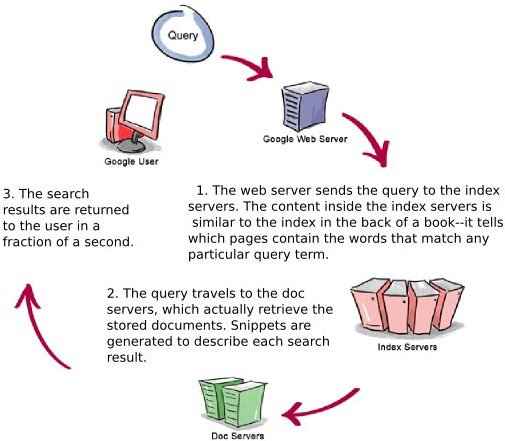

The information stored in the database by the Indexer is then called upon by the Query Processor when you enter your search string in Google. Using what the Indexer provides, the Query Processor then displays your results. The Query Processor is made up of several parts that help you find the best results for your search string.

There is, of course, the user interface where you enter your search string. Then there’s the “engine” that searches through the index to pick out the results it judges you will find useful, and finally the results formatter, which presents those results in a user-friendly manner.
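
To make the "engine" and "formatter" parts concrete, here is a toy version. It assumes an index shaped as term -> {page: [positions]}, returns only pages that contain every query term, and orders them by how often the terms appear. That ordering is a simple stand-in chosen for this example, not Google's ranking method, and `INDEX`, `search`, and `format_results` are illustrative names.

```python
# Hypothetical index: term -> {page: [positions of the term on that page]}.
INDEX = {
    "google": {"page1": [0], "page3": [2, 5]},
    "search": {"page1": [1], "page2": [0], "page3": [3]},
    "engine": {"page2": [1], "page3": [4]},
}

def search(query, index):
    """Return pages containing every query term, most occurrences first."""
    terms = query.lower().split()
    if not terms:
        return []
    postings = [index.get(term, {}) for term in terms]
    matching = set(postings[0])
    for posting in postings[1:]:
        matching &= set(posting)   # keep only pages that have this term too

    def score(page):
        # Stand-in relevance score: total occurrences of the query terms.
        return sum(len(posting[page]) for posting in postings)

    return sorted(matching, key=lambda page: (-score(page), page))

def format_results(pages):
    """Present results as a numbered list."""
    return "\n".join(f"{rank}. {page}" for rank, page in enumerate(pages, start=1))

results = search("google search", INDEX)
print(format_results(results))
```

Here `page2` is dropped because it never mentions “google”, and `page3` comes first because the query terms appear on it more often.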

The PageRank metric (detailed in part two of this series) is in the process of being patented by Google, so some details remain secret, but we do know that several factors go into calculating which pages show up where on the results page.

The results formatter handles things like spelling errors, and is constantly tweaked to improve efficiency and performance.
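
The article does not say how Google handles spelling errors. One simple way to suggest a correction, shown purely as an illustration, is to compare the typed word against a list of known words using Python's standard `difflib` module. The `VOCABULARY` list and the `suggest` helper are assumptions made for this sketch.

```python
import difflib

# Hypothetical list of known terms, for example the words in the index.
VOCABULARY = ["google", "search", "engine", "crawler", "index"]

def suggest(word):
    """Return the closest known word, or the word itself if nothing is close."""
    matches = difflib.get_close_matches(word.lower(), VOCABULARY, n=1, cutoff=0.6)
    return matches[0] if matches else word

print(suggest("gogle"))
```

A word with no close match is returned unchanged, so rare but correct search terms are left alone.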


Key Things to Remember

To quickly summarize:

- More frequent updates mean that your site will get crawled more often.

- Content is king! Keep it geared toward those keywords!

- Changes are made frequently on Google’s end to make sure they can continue to fight internet spam.