How Computer Video Cards Evolved Over the Years: A History of Graphics Cards

Video Card Evolution

Second only to memory, the video card is the most frequently upgraded component in home computers. The video card started out from humble beginnings at a time when advances in computer graphics moved much more slowly than they do today. The modern video card fits well within the granular (modular) paradigm of home computers, which makes upgrading a simple process. Read on to learn about the evolution of the video card in the home computer market.

Early Video Adapters

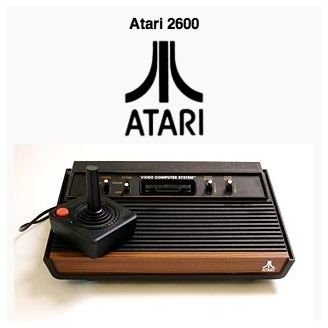

In the very early days of home computing, video adapters could not be removed from the computer and upgraded as they can today. Although not a home computer as we know it, the Atari 2600, for example, didn’t have a dedicated or separate video adapter in the modern sense. Its main processor had to drive the display itself while also running the game. However, computers based on similar technologies, such as the Atari 800XL and the Commodore 64, did have separate video hardware, although it was not granular: it could not be removed or upgraded.

Later home computers in the early 1980s had dedicated graphics arrays that were hardwired to the computer’s main board. True home computer granularity was still a number of years away at this point. When that granular paradigm did arrive, however, it gave rise to the video card.

Early Video Cards

The first true granular video cards were greatly underpowered by today’s standards. For example, the Video Graphics Array (VGA) was only capable of displaying graphics at a resolution of 640x480, and cards rarely came with more than 256 KB to 512 KB of video memory.
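To put those memory figures in perspective, the arithmetic can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, and the color depths (4 bits per pixel for 16 colors, 8 bits per pixel for 256 colors) are assumptions chosen for the comparison, not figures from any particular card.

```python
def framebuffer_bytes(width, height, bits_per_pixel):
    """Bytes needed to hold one full screen of pixels (hypothetical helper)."""
    return width * height * bits_per_pixel // 8


def fits_in_memory(required_bytes, memory_kb):
    """True if a screen of that size fits in the given video memory (in KB)."""
    return required_bytes <= memory_kb * 1024


# Assumed color depths: 4 bits per pixel = 16 colors, 8 bits per pixel = 256 colors.
vga_16_colors = framebuffer_bytes(640, 480, 4)    # 153600 bytes
vga_256_colors = framebuffer_bytes(640, 480, 8)   # 307200 bytes

print(fits_in_memory(vga_16_colors, 256))    # True
print(fits_in_memory(vga_256_colors, 256))   # False
print(fits_in_memory(vga_256_colors, 512))   # True
```

Under these assumptions, a 640x480 screen in 16 colors fits comfortably in 256 KB, while the same resolution in 256 colors needs a 512 KB card, which shows how tightly video memory limited what early cards could display.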

Underpowered as they were, these cards made video game manufacturers take notice of the PC as a viable gaming platform. At the time, consoles ruled the video game market. However, game manufacturers were not the only ones to take notice.

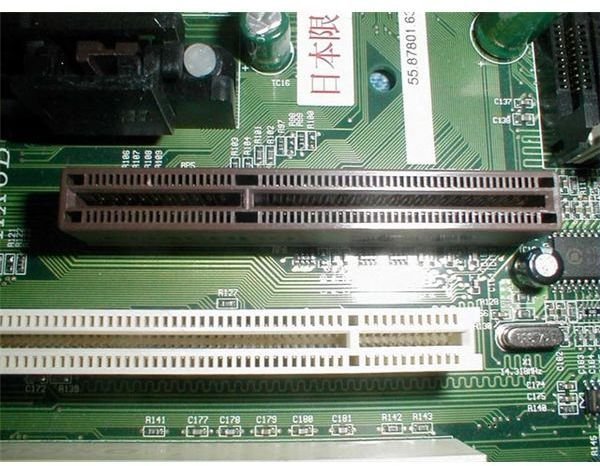

Video cards paved the way for graphical user interface operating systems such as Microsoft Windows and made possible business applications such as full-featured word processors and spreadsheets. Some of the earliest video cards plugged into ISA slots on the motherboard; later cards moved to PCI slots, which offered higher bandwidth.

The introduction of the AGP port was perhaps one of the biggest innovations in the history of the video card. Finally, a computer’s graphics card had a dedicated port of its own, with the highest bandwidth available up to that time.
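The bandwidth gain can be illustrated with a rough calculation. The sketch below is not a benchmark: the function name is hypothetical, and the bus widths and clock speeds are the commonly cited nominal figures for 32-bit PCI (about 33 MHz) and first-generation AGP (about 66 MHz), used here as assumptions. Real-world throughput was lower than these theoretical peaks.

```python
def peak_mb_per_second(bus_width_bits, clock_mhz, transfers_per_clock=1):
    """Theoretical peak bandwidth in MB/s: bytes per transfer x transfers per second."""
    return bus_width_bits / 8 * clock_mhz * transfers_per_clock


# Nominal figures, assumed for illustration only.
pci = peak_mb_per_second(32, 33.33)      # 32-bit PCI at roughly 33 MHz
agp_1x = peak_mb_per_second(32, 66.66)   # first-generation AGP at roughly 66 MHz

print(f"PCI peak:    about {pci:.0f} MB/s")
print(f"AGP 1x peak: about {agp_1x:.0f} MB/s")
print(f"AGP 1x is about {agp_1x / pci:.1f} times the PCI peak")
```

Because the AGP port was dedicated to the video card, that bandwidth also did not have to be shared with other expansion cards, as the PCI bus did.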

The Evolution of the Modern Video Card

Today, PCI Express slots support multi-card arrangements in which two or more video cards share the task of processing the computer’s video before sending it out to the monitor.

The modern video card market has turned into a two-horse race between nVidia and the former king of video cards, ATI. Founded in 1993, nVidia hit the video card market running with the introduction of a new line of graphics cards. At the time, ATI was the only real contender in the computer graphics market, and some say that the company was complacent about holding on to its title, essentially giving nVidia opportunities it shouldn’t have had. Regardless of the history of these two companies, it is clear that competition between ATI and nVidia is fierce, as evidenced by the constant release of new video cards, each promising to be the fastest on the market.

With the recent release of Windows 7 and DirectX 11, ATI and nVidia are once again vying for first place in the minds of consumers. It seems unlikely that another video card manufacturer will compete directly against these two giants in the near future. The rivalry between ATI and nVidia looks set to continue.

Conclusion

From humble beginnings, the video card industry has risen to be one of the most visible in the home computer market. What was once a fixed element of the home computer is now one of the most popular upgrades. The rivalry between ATI and nVidia has created a two-horse race in the industry, with little room for number three and below. In the near future, it is unlikely that any company will unseat either of these two giants from its position as one of the two most influential video card manufacturers.

This post is part of the series: The Evolution of the Video and Sound Card

These two articles briefly discuss the history and evolution of two of the most upgraded components in the modern computer. The first article looks at the video card’s evolution, while the second tackles the sound card from its humble beginnings.