Nvidia Fermi: A Layman's Explanation of Nvidia's New Architecture

A Turn

Nvidia has been taking a beating on the desktop GPU front. AMD’s 4000 series Radeon products generally provided better performance at a lower price than anything Nvidia was able to offer. More importantly, the Nvidia GPUs were also larger than those from AMD, which meant that Nvidia’s products cost more to produce. It was a lose-lose situation for Nvidia: it was second best in performance per dollar, and it had little room to cut prices.

Things have become even more dire with the Radeon 5000 series of products. AMD has jumped even further ahead of Nvidia, with DirectX 11 support and Eyefinity, which simplifies the operation of several monitors at once. The gap is now so wide that it is unclear how Nvidia could hope to keep up. And if Nvidia’s new architecture, Fermi, is any indication, Nvidia may not intend to keep up at all.

Doubled Again

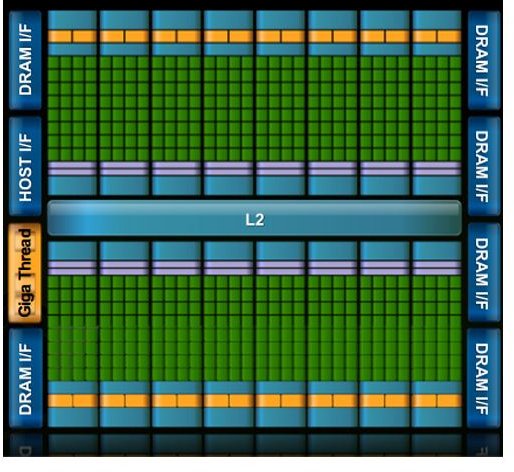

At first glance, Fermi’s advancement does not look all that different from what AMD did for the 5850 and 5870. For those products AMD doubled many resources which, in turn, nearly doubled performance. Fermi also provides more resources. The highest end GTX 200 products had 240 CUDA cores on board. Fermi increases that number to 512. In very general terms, this tells us that at similar clock speeds, and all else being equal, one would expect a Fermi GPU to be about twice as fast as current high-end Nvidia GPUs.
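The "about twice as fast" estimate is simple arithmetic, sketched below. The function name and the `clock_ratio` parameter are made up for illustration, and the model assumes performance scales linearly with core count and clock speed, which real games and applications rarely do.

```python
def relative_throughput(cores_new, cores_old, clock_ratio=1.0):
    """Naive estimate: throughput scales with core count times clock speed.

    clock_ratio is new clock / old clock (1.0 means identical clocks).
    This ignores memory bandwidth, drivers, and architectural changes.
    """
    return cores_new / cores_old * clock_ratio


# 512 Fermi CUDA cores versus 240 on the top GTX 200 parts, same clock.
speedup = relative_throughput(512, 240)
print(f"{speedup:.2f}x")  # 2.13x
```

If Fermi shipped at lower clock speeds than the GTX 200 series, the same formula would pull the estimate back below 2x.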

Another broad indicator of performance is memory bandwidth. Fermi offers a memory path 384 bits wide, courtesy of six 64-bit memory interfaces. This is actually down from 512 bits on most current GTX 200 series products, but the width of the path is only one factor. Fermi will use GDDR5 memory instead of the GDDR3 currently used, and GDDR5 moves roughly twice as much data per clock for a given path width. As a result it can be expected that memory bandwidth will actually be higher than not only any current GTX 200 product but also AMD’s new RV870 based products.
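To see how a narrower path can still deliver more bandwidth, here is a rough calculation. The GTX 285 and Radeon HD 5870 transfer rates are the commonly published figures for those cards; the Fermi transfer rate is a placeholder guess, since final memory clocks have not been announced, and the function name is invented for this sketch.

```python
def bandwidth_gb_per_s(bus_width_bits, transfers_per_s):
    """Peak bandwidth = bytes moved per transfer * transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfers_per_s / 1e9


# Published figures: 512-bit GDDR3 at about 2.484 billion transfers/s.
gtx285 = bandwidth_gb_per_s(512, 2.484e9)
# Published figures: 256-bit GDDR5 at 4.8 billion transfers/s.
hd5870 = bandwidth_gb_per_s(256, 4.8e9)
# ASSUMPTION: 4.0 billion transfers/s is a guess, not an Nvidia spec.
fermi_guess = bandwidth_gb_per_s(384, 4.0e9)

print(f"GTX 285:       {gtx285:.1f} GB/s")
print(f"HD 5870:       {hd5870:.1f} GB/s")
print(f"Fermi (guess): {fermi_guess:.1f} GB/s")
```

Under that guess the 384-bit Fermi path comes out ahead of both cards, but the result depends entirely on the memory speed Nvidia ends up shipping.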

Compute Focus

These figures are impressive, but they don’t necessarily indicate a product that will significantly outrun AMD’s products. They also do nothing to shrink Nvidia’s GPUs. In fact, the Fermi based products will yet again be the largest GPUs ever made, at around 3 billion transistors. In other words, early indications are that Fermi will do nothing to increase Nvidia’s competitiveness against AMD GPUs.

At least, not in the traditional role of the GPU. While Fermi offers no surprises for those wishing to play games, those who wish to use the GPU as a general-purpose processor already have their eyes on it. This is because Fermi offers multiple features aimed at improving the performance of Nvidia GPUs in general computing situations.

Two steps in this direction are Fermi’s improvement in double-precision performance and the inclusion of ECC memory. The double-precision performance of Fermi will likely be higher than that of any GPU to date. This is a big deal, as double-precision operations are often required in the general compute applications Nvidia is aiming for. ECC (error-correcting code) memory is included for the same reason. It allows errors in stored or transferred data to be detected and corrected, a must-have for many who are interested in using GPUs instead of CPUs in supercomputers, and maybe normal ones too.
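To give a feel for what error-correcting memory does, the toy sketch below stores 4 bits of data alongside 3 check bits using a classic Hamming(7,4) code, then repairs a single flipped bit. This is an illustration only: nothing here describes the scheme Fermi actually uses, real ECC memory generally protects much wider words, and the function names are invented for the example.

```python
def hamming74_encode(data_bits):
    """Turn 4 data bits into a 7-bit codeword (bit positions 1 to 7)."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # check bit covering positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # check bit covering positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4  # check bit covering positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_correct(codeword):
    """Fix at most one flipped bit; return (data bits, error position)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    position = s1 + 2 * s2 + 4 * s4  # 0 means no error was detected
    if position:
        c[position - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], position


stored = hamming74_encode([1, 0, 1, 1])
stored[4] ^= 1  # simulate a memory error at position 5
recovered, position = hamming74_correct(stored)
print(recovered, position)  # [1, 0, 1, 1] 5
```

The cost is extra storage for the check bits, which is why error correction tends to appear in servers and supercomputers before it shows up in consumer hardware.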

Pioneer

In fact, the orientation of Fermi towards general computing applications exists at every level. For example, Fermi is capable of parallel kernel execution, meaning it can run more than one kernel (a program written for the GPU) at the same time. The GPU will therefore be better able to handle multiple tasks at once, which is important in general computing. Fermi is also being designed alongside new tools which are meant to make it much easier for programmers to take advantage of Fermi GPUs, and a new ISA (instruction set architecture) which Nvidia says will fully support C++.

None of that has much to do with playing Crysis. But Nvidia is not just interested in gamers anymore. It is interested in movie studios which need to render 3D animated films as quickly as possible and in companies which need a small and relatively cheap supercomputer capable of processing huge amounts of data in parallel. This is a market that is still in many ways untapped, there being a huge gap in price and performance between a high-end workstation and low end supercomputer, and a market in which Nvidia has an edge.

What is unclear is to what extent Nvidia’s push towards serving that market will affect its support of the traditional desktop GPU market. The release of Fermi based products will be one to watch very closely, as it will determine if Nvidia is really intending to try and serve both markets or if Nvidia is planning to eventually pull out of desktop graphics altogether. No matter what Nvidia intends, the results should be interesting.